Computation Thinking (Part 2):

Thinking Logically/Concurrently:

Structured Programming:

When thinking computationally, it's important to think like a computer. Part of this is the structured programming approach, where you think in terms of the following basic programming structures:

- Sequence (statements are ordered)

- Selection (making different choices based on some condition)

- Iteration (doing something repeatedly, e.g. for or while loops)

Many languages, like Python, are block-structured, meaning they operate using just these 3 control structures.

Designing Algorithms:

Using techniques like this, as well as pseudocode or flow diagrams, you can plan an algorithm before you put it to source code. This lets you become aware of potential bugs before they occur, as well as giving you a rough idea of how to code the program from the start. Other tools such as trace tables are also very useful.

Thinking Concurrently:

Concurrent computing is not to be confused with parallel computing, which has multiple processors executing instructions at the same time. Concurrent computing on the other hand is performed on a single processor, which multitasks by switching between tasks. This has multiple benefits, but als some trade-offs:

- Increased program throughput

- Waiting time is used on another task (kind of a subset of the previious point)

- On the other hand, if many programs are being run at once then the system may slow down dramatically

Problem Recognition:

Computability:

It's also important to know when a problem is even solvable, or worth solving. A computable problem is one where it's possible to create an algorithm that can solve for every case of a problem. It's worth noting though that this doesn't necessarily mean the algorithm is useful: a computationally complete algorithm may take far too long to have any practical use, e.g. cracking a secure password - you can brute-force it if you're patient, but it will take you millions of years.

Methods of Solution:

It's also useful to be able to recognise roughly how you plan to solve the problem in the long run. There are a few broad methods:

- Enumeration (aka exhaustion -- listing all possible cases)

- Simulation (Numerically solve the problem approximately if it's too complex for a complete solution, by abstracting it away to its essentials)

- Theoretical Approach (Try to avoid doing actual coding as long as possible until you've mathematically reduced the problem to a simpler case)

- Creative Solution (Thinking outside the box, such as repurposing a not obiously connected existing solution to a different problem)

Strategies for Solution:

There are also some different general strategies for solving the problem once you've got your method. Here are a few (generally you'd use these on decomposed sub-problems from a larger problem at hand):

- Divide and Conquer (split the problem repeatedly to reduce its size down to the simplest cases, e.g. merge sort)

- Problem Abstraction (Removing unnecessary details until the problem is reduced to an already solved one -- really you should be trying this with every problem you come across)

- Automation (Building models which can - at least partially - solve the problem for you)

Problem Solving:

For some reason, the last subheading, which was literally called methods of solving a problem isn't included in this chapter.

There are several useful tools to solve a problem:

Visualisation:

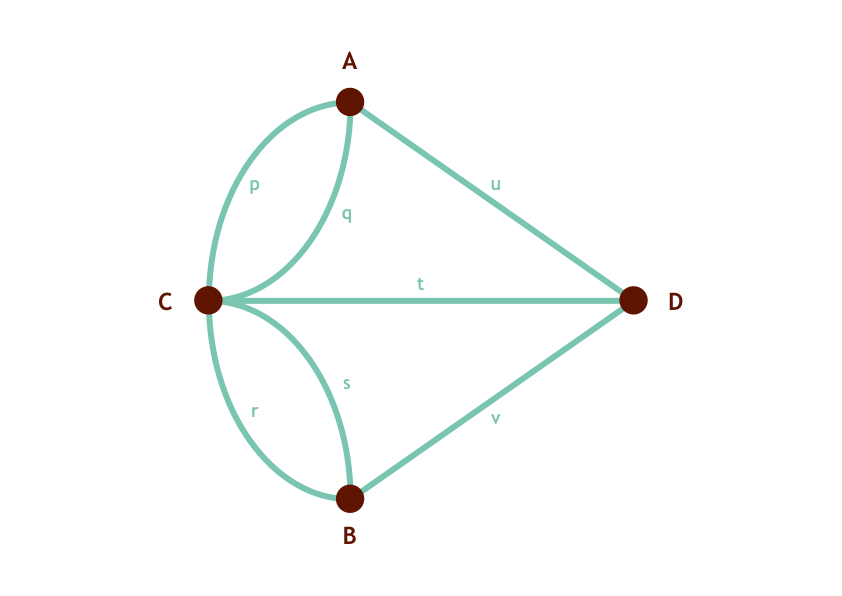

You can often learn a lot by just presenting the problem in a visually revealing way. This is part of the reason why flow diagrams and logic diagrams are so useful - they show us where we can simplify some cases and give us a general idea of the problem, such as in this visualisation of the Seven Bridges of Koenigsburg:

Data Mining:

Data mining refers to digging through large data sets in order to predict trends and build models. You may have heard of Big Data -- this is the term used for large sets of data that are too big to be handled by a traditional datasets. Statistical models are often a lot more powerful than other algorithms (especially in the advent of AI) as there isn't always a nice easy solution.

Time Complexity:

An intractable problem is a problem which can't reasonably be solved in a short amount of time. The most famous example of this is the Travelling Salesman Problem, in which the titular salesman needs to navigate the shortest route through every town in a graph while only visiting each town once.

A good way to think about this is with the power of time complexity, a concept describing how long it will take to solve a problem. Often we use 'big O' notation (e.g. O(n)), which tells you how the time needed to solve a problem will scale with size of input in the worst possible case. Sometimes we can drastically reduce the time complexity of a problem using heuristic methods, which are greedy algorithms that make educated guesses in order to generally shorten the required time. A good example of this is the A* algorithm, which despite having the same time complexity of O(N log N) as Dijkstra's Algorithm, uses an heuristic to more than double the speed at which it produces a result in most cases.

You can also speed up many algorithms using pipelining: splitting tasks up and overlapping them to reduce downtime, like an assembly line.

Performance Modelling:

Performance modelling refers to the process of simulating different amounts of load on a computer using a mathematical approximation, in order to test performance in more extreme scenarios more cheaply.